From Plain English to Enforceable Policy

Kyvvu’s focus is narrow by design: runtime governance of AI agents. Not prompt engineering, not access control, not model evaluation: runtime. We intercept every agent step before it executes, evaluate it against declared policy, and block or escalate violations in real time, across any agent platform and any number of agents running simultaneously.

The core abstraction is policies on paths. An agent execution is a sequence of steps — LLM calls, tool calls, data retrievals — and the safety of any individual step depends on what preceded it. That is where the risk lives, and that is where enforcement has to happen.
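
To make the idea concrete, here is a minimal Python sketch of a step-level gate. Every name in it (`Step`, `Verdict`, `gate`, the `external_api` and `payments` targets) is hypothetical and chosen for illustration; none of it is Kyvvu's actual API. The point is only that a proposed step is judged against the path that preceded it, before it runs.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, List, Tuple


class Verdict(Enum):
    ALLOW = "allow"
    BLOCK = "block"


@dataclass(frozen=True)
class Step:
    """One node in an agent execution path (hypothetical shape)."""
    kind: str    # e.g. "LLM_CALL", "TOOL_CALL"
    target: str  # e.g. "crm", "email", "payments"
    action: str  # e.g. "GET", "POST"


# A policy sees the path so far plus the proposed step and returns a verdict.
Policy = Callable[[Tuple[Step, ...], Step], Verdict]


def gate(path: List[Step], proposed: Step, policies: List[Policy]) -> Verdict:
    """Evaluate a proposed step before it executes; record it only if allowed."""
    for policy in policies:
        if policy(tuple(path), proposed) is Verdict.BLOCK:
            return Verdict.BLOCK
    path.append(proposed)
    return Verdict.ALLOW


def no_payment_after_external_read(path, proposed):
    """Illustrative rule: block a payment if any earlier step read external data."""
    is_payment = proposed.kind == "TOOL_CALL" and proposed.target == "payments"
    read_external = any(
        s.target == "external_api" and s.action == "GET" for s in path
    )
    return Verdict.BLOCK if is_payment and read_external else Verdict.ALLOW
```

The same payment step is allowed on an empty path and blocked once an external read is on the path, which is exactly why the check cannot look at the current step alone.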

The Policy Specification Problem

Working closely with clients in insurance, banking, and healthcare, we run into the same situation repeatedly. Compliance teams can articulate constraints clearly in plain language:

“We should never send out customer information via email.”

“An agent should not initiate a payment after reading data from an external source.”

“No customer record should be retrieved unless the agent has first verified the user’s identity.”

Intuitive. Unambiguous. Everyone in the room nods.

Translating that into a formal, deterministic policy function is a different matter. Kyvvu policies are functions of execution paths — they evaluate the sequence of steps an agent has taken, not just the current action in isolation. That expressiveness is what makes them powerful. It is also what makes authoring them non-trivial.
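
As a rough illustration of what "a function of the execution path" means, the sketch below encodes the identity-verification constraint quoted earlier. The `Step` tuple, the `requires_prior_step` helper, and the `identity`/`VERIFY` labels are assumptions made for this example, not Kyvvu rule functions.

```python
from typing import NamedTuple, Sequence


class Step(NamedTuple):
    kind: str
    target: str
    action: str


def requires_prior_step(path: Sequence[Step], proposed: Step,
                        guarded: Step, prerequisite: Step) -> bool:
    """Return True if `proposed` is permitted.

    The guarded step is permitted only when the prerequisite step
    already appears somewhere earlier in the path.
    """
    if proposed != guarded:
        return True  # the rule does not apply to this step
    return prerequisite in path


# Hypothetical step labels for the example.
VERIFY = Step("TOOL_CALL", "identity", "VERIFY")
READ_RECORD = Step("TOOL_CALL", "crm", "GET")

# Same proposed step, two different histories, two different outcomes.
allowed_without_check = requires_prior_step([], READ_RECORD, READ_RECORD, VERIFY)
allowed_with_check = requires_prior_step([VERIFY], READ_RECORD, READ_RECORD, VERIFY)
```

The function is deterministic: given the same path and the same proposed step, it always returns the same answer, which is the property a plain-language rule lacks.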

We ship policy bundles for specific industries and specific legislation — GDPR, EU AI Act, sector-specific requirements — and those cover a lot of ground. But every client deployment has its own operational constraints that no pre-built bundle anticipates.

An Agent That Writes Policies

So we built an agent that does the translation.

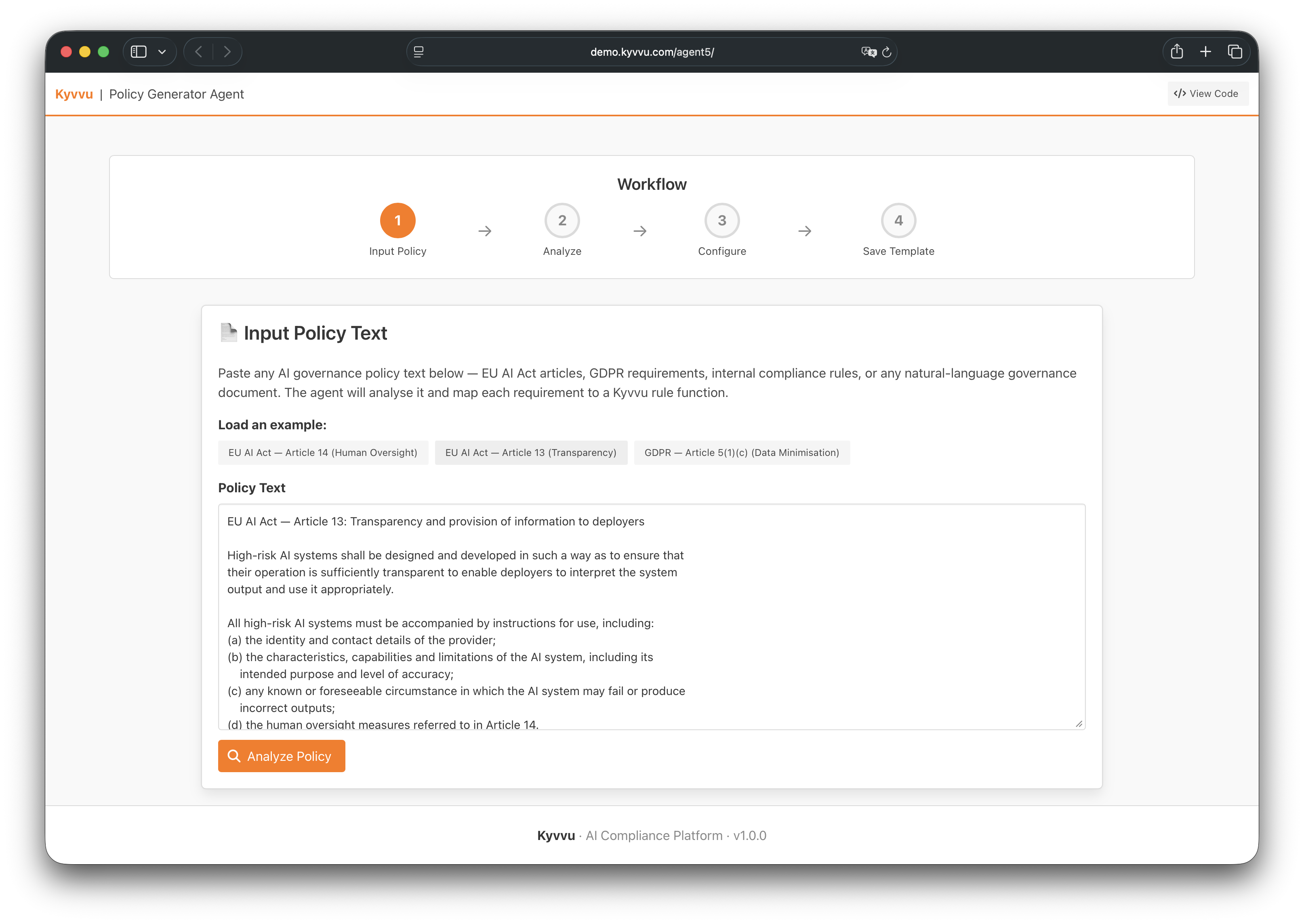

Paste any natural language governance text: EU AI Act articles, GDPR requirements, internal compliance rules.

The Policy Generator Agent takes natural language governance text as input — anything from a regulatory article to an internal compliance note — analyzes it, and maps each requirement to concrete Kyvvu rule functions. The output is a YAML policy template, ready to load directly into Kyvvu.

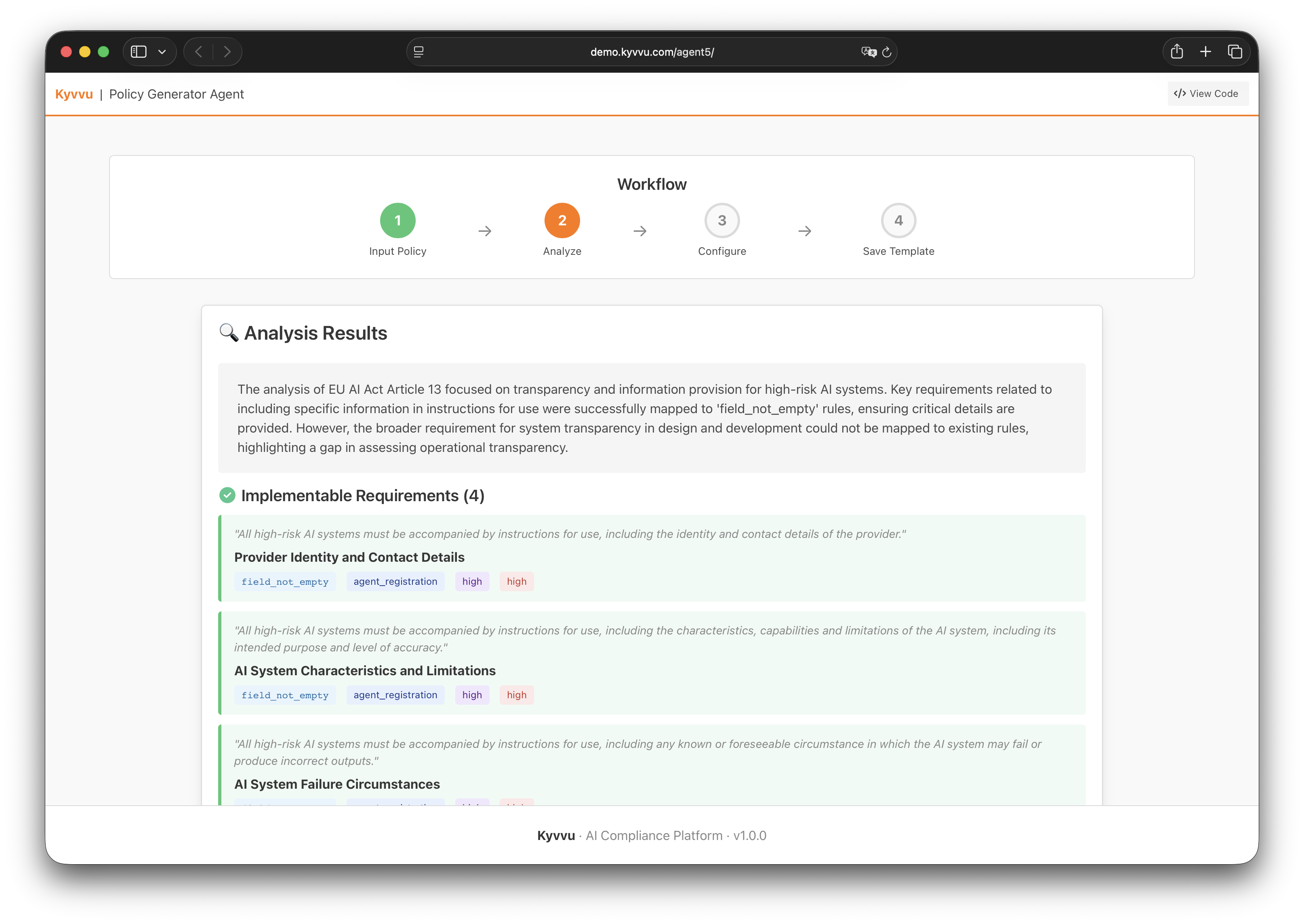

Each natural language requirement is mapped to an implementable rule function, with scope, severity, and risk classification.

The translation works better than we expected. “We should never send out customer information via email” becomes:

    node_cannot_be_preceded_by: {
      "target_node_type": ["TOOL_CALL", "email", "POST"],
      "preceding_node_type": ["TOOL_CALL", "crm", "GET"]
    }

applied to any outbound email action. The constraint that was obvious in English is now deterministic, path-aware, and enforced at the infrastructure layer — not by asking the model to be careful.
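
For readers who want to see how a rule of this shape could be evaluated, here is a hedged Python sketch. It reuses the rule name and parameters from the snippet above, but the evaluation logic is an assumption: the source does not spell out the exact matching semantics, so this version treats each node as a `[kind, target, action]` triple and flags a violation when the proposed step matches the target and any earlier step matches the preceding node.

```python
from typing import List, Sequence

# Rule parameters copied from the generated policy above.
RULE = {
    "target_node_type": ["TOOL_CALL", "email", "POST"],
    "preceding_node_type": ["TOOL_CALL", "crm", "GET"],
}


def node_cannot_be_preceded_by(path: Sequence[List[str]],
                               proposed: List[str],
                               rule: dict) -> bool:
    """Return True if the proposed step violates the rule.

    Assumed semantics (not confirmed by the source): exact match on the
    [kind, target, action] triple, anywhere earlier in the path.
    """
    if proposed != rule["target_node_type"]:
        return False  # rule only constrains the target node
    return any(step == rule["preceding_node_type"] for step in path)


# Hypothetical history: the agent planned, then read from the CRM.
history = [
    ["LLM_CALL", "planner", "INVOKE"],
    ["TOOL_CALL", "crm", "GET"],
]
send_email = ["TOOL_CALL", "email", "POST"]

violates = node_cannot_be_preceded_by(history, send_email, RULE)
```

Here `violates` is `True` because a CRM read precedes the email; with the CRM step removed from `history`, the same email step would pass.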

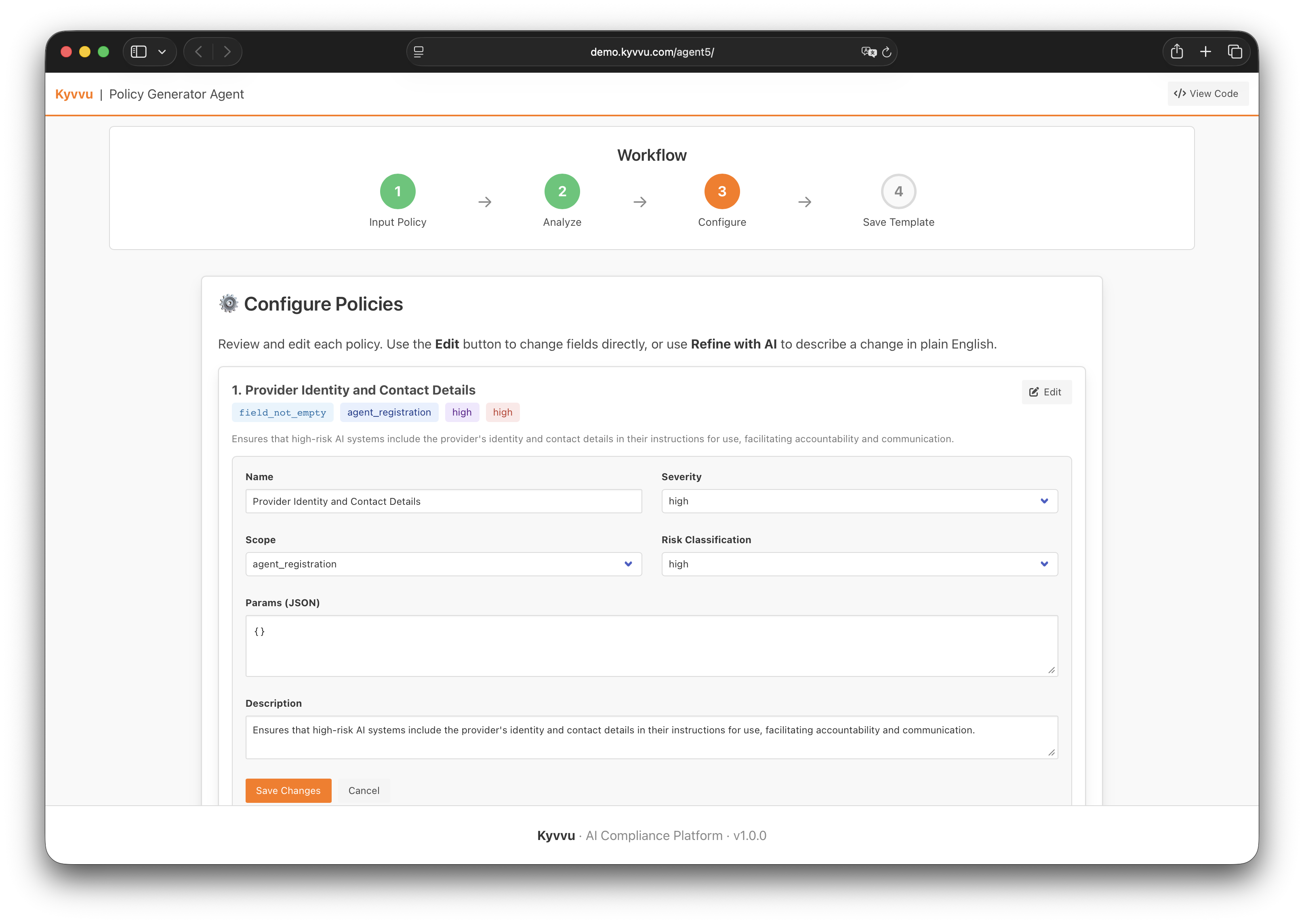

Each generated policy can be reviewed and refined — edit fields directly, or describe a change in plain English.

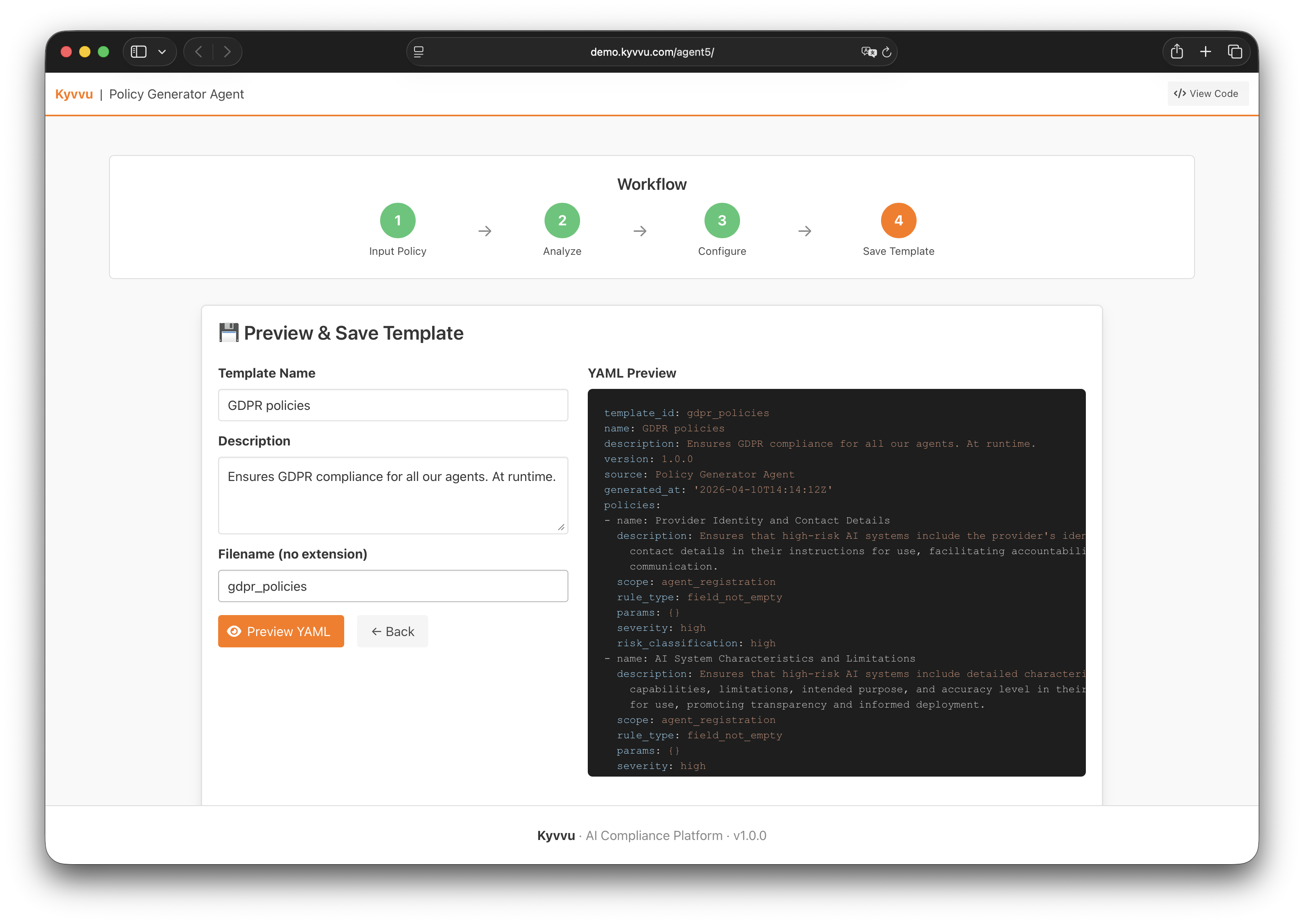

After analysis, every generated policy can be reviewed, adjusted, and saved as a named template. The full workflow runs in four steps: input → analyze → configure → save. The resulting YAML is versioned, auditable, and reusable across agent deployments.

The final output: a GDPR policy bundle generated from Article 13, ready to apply across your agent fleet.

What Doesn’t Change

The Policy Generator is a new capability, not a change in what Kyvvu is.

Enforcement still happens at runtime — at the step level, before actions complete, regardless of which agent platform is underneath. The audit trail is still hash-chained and forensically sound. Incident escalation still fires in real time. And policies are still functions of execution paths, not static keyword filters or model instructions.
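
The text says only that the audit trail is hash-chained. As a generic illustration of that technique, and not a description of Kyvvu's implementation, the Python sketch below chains entries with SHA-256 over the previous hash plus a canonical JSON encoding of the event. The entry fields and function names are hypothetical.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(prev_hash: str, event: Dict) -> str:
    """Hash the previous entry's hash together with a canonical event encoding."""
    payload = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: List[Dict], event: Dict) -> None:
    """Append an event, linking it to the hash of the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({
        "event": event,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, event),
    })


def verify_chain(log: List[Dict]) -> bool:
    """Recompute every link; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Because each hash depends on everything before it, changing a recorded verdict after the fact makes `verify_chain` return `False` unless every later entry is recomputed as well.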

What changes is how quickly a compliance team can go from a regulatory requirement or an internal constraint to something that actually runs.

If you’re deploying agents in a regulated environment and wrestling with the gap between what your governance team can describe and what your engineering team can implement, we’d like to talk.